Recently, I faced a challenge while helping a customer deploy their SIEM solution on Oracle Cloud Infrastructure (OCI). The customer provided an ISO installer, which is not a natively supported format for OCI custom images.

OCI currently supports importing custom images in VMDK, QCOW2, and OCI image formats. As of December 2025, the maximum supported image size is 400 GB (this limit may change in the future). You can find additional details about importing custom Linux images in the OCI documentation.
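Before uploading anything, it is worth checking a file against these two constraints so the import does not fail. The Python sketch below is my own illustration and not an OCI tool. It infers the format from the file extension, which is an assumption (`.oci` is assumed for OCI-format images). It also reads the 400 GB limit as decimal gigabytes, the stricter of the two possible readings.

```python
from pathlib import Path

# Importable formats named in this post, keyed by an assumed file extension.
SUPPORTED_EXTENSIONS = {".vmdk": "VMDK", ".qcow2": "QCOW2", ".oci": "OCI"}
# 400 GB limit as of December 2025; read as decimal GB (the stricter interpretation).
MAX_IMPORT_BYTES = 400 * 10**9


def check_importable(path: str, size_bytes: int) -> list[str]:
    """Return a list of problems; an empty list means the file passes both checks."""
    problems = []
    suffix = Path(path).suffix.lower()
    if suffix not in SUPPORTED_EXTENSIONS:
        problems.append(f"unsupported format '{suffix or '(none)'}'")
    if size_bytes > MAX_IMPORT_BYTES:
        problems.append(f"size {size_bytes} B exceeds {MAX_IMPORT_BYTES} B")
    return problems
```

For a real file, pass `os.path.getsize(path)` as the size. An ISO such as `qradar.iso` is rejected on format alone, and that is the problem the rest of this post works around.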

Given these constraints, I followed the approach below to successfully deploy IBM QRadar Console on OCI.

- Install VirtualBox on a compute VM running on OCI

- Install Oracle VirtualBox Extension Pack and configure OCI integration

- Create a VirtualBox VM using the QRadar ISO

- Import the VM disk (VMDK) as a custom image in OCI

- Launch a VM with the desired compute, storage, and networking

- Extend storage using LVM

- Configure QRadar networking

1 Installing VirtualBox on a compute VM in OCI is straightforward. I downloaded VirtualBox for my platform. During installation, I was prompted to install the latest Microsoft Visual C++ redistributable package, which is required.

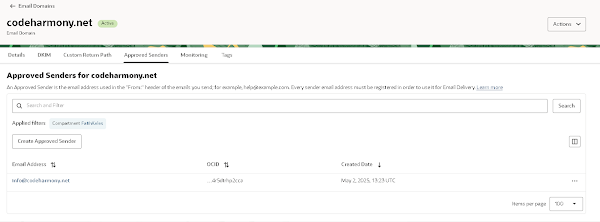

2 Next, I installed the VirtualBox Extension Pack. Once it was enabled, I configured a Cloud Profile using OCI API keys. This allows VirtualBox to push custom images directly to OCI.

This step is critical. Uploading the VMDK manually to OCI Object Storage and creating a custom image using generic parameters did not work.

VirtualBox Cloud Integration automatically selects image launch parameters that allow the VM to boot successfully in OCI.
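The Cloud Profile needs the same API-key details that the OCI CLI and SDKs read from their config file: user OCID, key fingerprint, tenancy OCID, region, and private key path. If you keep these in `~/.oci/config` (an assumption about your setup), the hypothetical helper below checks a profile for missing keys before you enter it in VirtualBox or import it. For named profiles it falls back to the `[DEFAULT]` section, which is standard `configparser` behavior.

```python
import configparser

# Keys the OCI config file uses for API-key authentication.
REQUIRED_KEYS = ("user", "fingerprint", "tenancy", "region", "key_file")


def missing_profile_keys(config_text: str, profile: str = "DEFAULT") -> list[str]:
    """Return the required keys that are absent or empty in the given profile."""
    # Disable interpolation so '%' characters in paths are taken literally.
    parser = configparser.ConfigParser(interpolation=None)
    parser.read_string(config_text)
    if profile == "DEFAULT":
        section = parser.defaults()
    elif parser.has_section(profile):
        section = parser[profile]  # named sections inherit [DEFAULT] values
    else:
        return list(REQUIRED_KEYS)
    return [key for key in REQUIRED_KEYS if not section.get(key)]
```

A typical call is `missing_profile_keys(Path("~/.oci/config").expanduser().read_text())`. An empty list means the profile has every field the Cloud Profile form asks for.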

3 The QRadar installation is heavily automated using the Anaconda installer. It performs hundreds of steps and multiple reboots. Below are only the important and non-obvious steps.

a Uncheck “Proceed with Unattended Installation.”

If unattended installation is enabled, the VM fails to start with errors similar to the following:

VERR_ALREADY_EXISTS - Error setting name '/ks.cfg'

PIIX3 cannot attach drive to the Primary Master

Power up failed (VERR_ALREADY_EXISTS)

This happens because QRadar already includes its own kickstart configuration.

b QRadar is resource-intensive. If the minimum requirements are not met, the installer will not show the “All-in-One Console” option. Minimum requirements:

- CPU: 4 vCPUs

- Memory: 16 GB
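A small pre-flight check can catch an undersized VM before the installer silently drops that option. This is a hypothetical helper. The thresholds are the minimums from this post, and the 256 GB disk minimum comes from the next step.

```python
from dataclasses import dataclass


@dataclass
class VmSpec:
    vcpus: int
    memory_gb: int
    disk_gb: int


# Minimums used in this post; below the CPU/memory values the installer
# does not offer the "All-in-One Console" option.
MINIMUMS = {"vcpus": 4, "memory_gb": 16, "disk_gb": 256}


def shortfalls(spec: VmSpec) -> list[str]:
    """List every resource that is below its minimum."""
    return [
        f"{name}: {getattr(spec, name)} < {minimum}"
        for name, minimum in MINIMUMS.items()
        if getattr(spec, name) < minimum
    ]
```

For example, `shortfalls(VmSpec(vcpus=2, memory_gb=16, disk_gb=256))` reports only the CPU shortfall.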

c Configure Disk Size and Format:

- Minimum disk size: 256 GB

- VirtualBox creates a dynamically allocated disk by default, so space is not pre-allocated unless you choose a fixed-size disk

- This disk becomes the OCI boot volume later, so keep it minimal and resize cautiously

- Select VMDK as the disk format (useful if manual copy is required)
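I configured the VM in the VirtualBox GUI. For a scripted setup, the same settings can be expressed with `VBoxManage`. The sketch below only builds the argument lists as a dry run, and it assumes the VM already exists under the hypothetical name `QRadar`. Because `--size` takes megabytes, 256 GB is treated here as 256 × 1024 MB. The `Standard` variant is VirtualBox's dynamically allocated disk, and `Fixed` would pre-allocate the space instead.

```python
import shlex


def vbox_setup_commands(vm: str, disk_path: str, disk_gb: int = 256,
                        vcpus: int = 4, memory_mb: int = 16 * 1024) -> list[list[str]]:
    """Build (but do not run) VBoxManage commands for CPU, memory and the VMDK disk."""
    return [
        ["VBoxManage", "modifyvm", vm, "--cpus", str(vcpus), "--memory", str(memory_mb)],
        # Standard = dynamically allocated; space is consumed as the guest writes.
        ["VBoxManage", "createmedium", "disk", "--filename", disk_path,
         "--size", str(disk_gb * 1024), "--format", "VMDK", "--variant", "Standard"],
    ]


for cmd in vbox_setup_commands("QRadar", "qradar.vmdk"):
    print(shlex.join(cmd))
```

Before booting the ISO, the new disk must still be attached to the VM's storage controller with `VBoxManage storageattach`.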

d I started the VM in GUI mode and followed the on-screen instructions. Most steps are automated. At one point, the installer prompted for user input; I selected FLATTEN and continued.

e Later, when prompted again, I typed HALT to stop the VM.

f While the VM was stopped, I removed the optical drive (ISO) and restarted the VM.
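Step f can also be done from the host command line. This hypothetical sketch assumes the optical drive sits on a controller named `IDE` at port 1, device 0. Check the real controller name and slot with `VBoxManage showvminfo` first. `--medium none` removes the device from that slot, and `startvm --type gui` restarts the VM in GUI mode as in step d.

```python
import shlex


def detach_iso_and_restart(vm: str, controller: str = "IDE",
                           port: int = 1, device: int = 0) -> list[list[str]]:
    """Build the VBoxManage calls that remove the ISO drive and restart the VM."""
    return [
        # Controller name and slot are assumptions; confirm them per VM.
        ["VBoxManage", "storageattach", vm, "--storagectl", controller,
         "--port", str(port), "--device", str(device), "--medium", "none"],
        ["VBoxManage", "startvm", vm, "--type", "gui"],
    ]


for cmd in detach_iso_and_restart("QRadar"):
    print(shlex.join(cmd))
```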

g The scripted installation resumed and took some time to complete.

h When eventually prompted to log in, I logged in as root.

i I scrolled through the license agreement (Space key) and typed yes to accept.

j The installation continues with Software Install.

k The installer does not display the All-in-One Console option if the VM has fewer resources than the minimums listed in step b.

l The installation continues with the normal setup (not HA), the date, time, and time zone settings, IPv4, and enp0s3 as the NIC for networking. QRadar uses a static IP configuration. I used a generic OCI VCN configuration (10.0.0.0/16); this can be changed at any time later. I reconfigured it over a VNC connection after provisioning the VM on OCI.

m Later, I set the admin password (used for the QRadar web console) and the root password (used for SSH).

n The installation is complete, and the QRadar web console is running on localhost:443.

4 Initially, I tried uploading the VMDK manually to Object Storage and creating a custom image using PARAVIRTUALIZED mode. Unfortunately, the VM failed to boot with dracut errors, so I let VirtualBox choose the required parameters by using the Export to OCI feature.

a Select the VM in VirtualBox

b I preferred to create the image and provision the instance manually later, but VirtualBox can also launch the instance for you.

c Select the Object Storage bucket.

d VirtualBox first uploads the VMDK file to Object Storage and then creates the custom image. This might take some time.

e Once it completes, you can see the file in Object Storage and the custom image in OCI.
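I used the GUI wizard, but VirtualBox also documents a command-line export to OCI. The sketch below builds such a command. The flag names (`--output OCI://`, `--cloud`, `--cloudprofile`, `--cloudbucket`, `--cloudlaunchinstance`) come from my reading of the `VBoxManage export` documentation, so verify them against `VBoxManage export --help` for your VirtualBox version. The VM, profile, and bucket names are hypothetical, and some versions may ask for additional `--cloud*` parameters.

```python
import shlex


def export_to_oci_command(vm: str, profile: str, bucket: str,
                          launch_instance: bool = False) -> list[str]:
    """Build a VBoxManage export command that uploads the VM and creates a custom image."""
    return [
        "VBoxManage", "export", vm,
        "--output", "OCI://",            # cloud export target instead of an .ova file
        "--cloud", "0",                  # index of the virtual system being exported
        "--cloudprofile", profile,       # Cloud Profile configured in step 2
        "--cloudbucket", bucket,         # Object Storage bucket for the uploaded disk
        "--cloudlaunchinstance", "true" if launch_instance else "false",
    ]


print(shlex.join(export_to_oci_command("QRadar", "my-oci-profile", "qradar-images")))
```

Keeping `--cloudlaunchinstance false` matches the approach in step b: create only the image, then launch the instance separately.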

Important: The resulting image uses:

- BIOS firmware

- IDE boot disk

- E1000 network adapter

The combination of IDE and BIOS limits the boot volume size to about 2 TiB. Any resize beyond this limit breaks the boot sequence: the data is still present, but the VM will not boot.
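The roughly 2 TiB figure matches a well-known limit. My assumption, not verified inside the image, is that BIOS boot here relies on an MBR partition table. MBR sector addresses are 32-bit, so with 512-byte sectors the largest addressable disk works out as follows:

```python
# Assumption: BIOS boot uses an MBR partition table with 32-bit sector addresses.
SECTOR_BYTES = 512      # traditional logical sector size
MAX_SECTORS = 2**32     # largest count a 32-bit LBA field can express

limit_bytes = SECTOR_BYTES * MAX_SECTORS
limit_tib = limit_bytes / 1024**4

print(f"{limit_bytes:,} bytes = {limit_tib} TiB (~{limit_bytes / 10**12:.1f} TB)")
# prints: 2,199,023,255,552 bytes = 2.0 TiB (~2.2 TB)
```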

5 I launched a VM instance using the custom image. In my tests, the instance failed to boot whenever I allocated more than 2 TiB for the boot volume. As explained here, I connected to the instance console with a VNC viewer, entered the root password, and was ready to complete the configuration.

6 The 2 TiB of storage is visible but not yet allocated, so I fixed this first.

a Partition the disk, adding a new partition that uses all of the available space.

b Initialize the new partition as an LVM physical volume, extend the volume group by adding it, and then reboot.

c Now I can see that /store has more available space.
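The post does not list the exact commands. A plausible sequence after creating the partition (for example with `fdisk`) is `pvcreate`, `vgextend`, and `lvextend`, and the sketch below prints that plan rather than running it. The partition `/dev/sda4`, volume group `vg_store`, and logical volume `store` are hypothetical names, so check yours with `lsblk`, `vgs`, and `lvs`. Using `lvextend -r` to grow the filesystem together with the logical volume is my choice, not necessarily what was done here. If the kernel does not see the new partition yet, a reboot may be needed before `pvcreate`.

```python
import shlex


def lvm_extend_plan(partition: str, vg: str, lv: str) -> list[list[str]]:
    """Return (not execute) the LVM commands that hand a new partition to an LV."""
    lv_path = f"/dev/{vg}/{lv}"
    return [
        ["pvcreate", partition],                        # initialize the physical volume
        ["vgextend", vg, partition],                    # add it to the volume group
        # -r (--resizefs) grows the filesystem along with the logical volume.
        ["lvextend", "-r", "-l", "+100%FREE", lv_path],
    ]


for cmd in lvm_extend_plan("/dev/sda4", "vg_store", "store"):
    print(shlex.join(cmd))
```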

7 The final stage is to reconfigure QRadar networking with qchange_netsetup, updating the private IP to the one assigned from the subnet.

The script got stuck, so after waiting for a while I restarted the server. The next time I ran qchange_netsetup, I saw an error message saying there were pending changes, so I deployed them using

/opt/qradar/upgrade/util/setup/upgrades/do_deploy.pl
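When qchange_netsetup asks for the new address, it helps to check it against the subnet first. This sketch uses Python's `ipaddress` module. It assumes OCI's documented convention that each subnet reserves its first two addresses (the network address and the default gateway) and its last address. The subnet in the example is a hypothetical /24 inside the 10.0.0.0/16 VCN used earlier.

```python
import ipaddress


def netsetup_values(ip: str, subnet_cidr: str) -> tuple[str, str]:
    """Validate a static IP for an OCI subnet and return (netmask, gateway)."""
    net = ipaddress.ip_network(subnet_cidr)
    addr = ipaddress.ip_address(ip)
    if addr not in net:
        raise ValueError(f"{ip} is outside {subnet_cidr}")
    gateway = net.network_address + 1
    # Network address, default gateway, and last address are reserved by OCI.
    if addr in (net.network_address, gateway, net.broadcast_address):
        raise ValueError(f"{ip} is a reserved address in {subnet_cidr}")
    return str(net.netmask), str(gateway)


print(netsetup_values("10.0.0.25", "10.0.0.0/24"))
```

Use the private IP that OCI actually assigned to the VNIC. The helper only confirms it is consistent with the subnet and derives the netmask and gateway values to enter.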

After configuring the security list and/or network security group (NSG) in my VCN, I was able to SSH into the server and log in to the QRadar console from my browser.
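Once the security list or NSG rules are in place, a quick reachability test from your workstation can confirm that SSH (22) and the web console (443) are open. Here is a minimal sketch using only the standard library. The host you pass is your instance's public or private IP, depending on where you test from.

```python
import socket


def port_open(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # refused, unreachable, or timed out
        return False
```

For example, `[p for p in (22, 443) if not port_open(ip, p)]` lists the ports that are still blocked. A `False` result can also mean the service itself is not listening, so check both the VCN rules and the instance.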

Important Notes

- I tried importing the VMDK myself using the most generic parameters, but unfortunately the VM didn't boot. Once I imported the custom image using VirtualBox Cloud Integration, I saw that it uses very specific, older technologies: E1000 for the NIC attachment, BIOS firmware, and IDE for the boot volume. The last two in combination limit the boot volume size to 2 TiB. I tried resizing the boot volume both offline and online, but the VM didn't boot after the resize.

- QRadar has a specific storage layout and requires most of the space for the /store partition. Since I couldn't extend the boot volume, I decided to attach a block volume as a data disk. Attaching it worked fine, but how do I enable QRadar to use the new disk? I added the new block volume to the volume group and extended /store, but unfortunately, after a restart, the volume group failed because of the iSCSI attachment. My guess is that volume group initialization is part of the boot process, whereas network storage can only be attached and mounted after a successful boot. So this approach also didn't work. I was, however, able to recover the VM later by editing fstab and removing the additional block volume.
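The fstab recovery can also be scripted. The hypothetical helper below comments out every active fstab entry for a given mount point instead of deleting it, so the original line stays for reference. Keep a backup of `/etc/fstab` before writing the result back. Which entry to disable depends on how the block volume was used, so the mount point is a parameter.

```python
def comment_out_mount(fstab_text: str, mount_point: str) -> str:
    """Prefix '# ' to each active fstab line whose mount point matches."""
    out = []
    for line in fstab_text.splitlines():
        fields = line.split()
        # fstab fields: device, mount point, fs type, options, dump, pass
        is_active = bool(fields) and not fields[0].startswith("#")
        if is_active and len(fields) >= 2 and fields[1] == mount_point:
            out.append("# " + line)
        else:
            out.append(line)
    return "\n".join(out) + "\n"
```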

Final Thoughts

This approach works only if you are comfortable with the 2 TiB boot volume limitation. For a SIEM solution that continuously collects logs, this is a serious constraint. One possible workaround is to move data off the instance to free up space. That said, I would recommend deploying the OCI image available from the IBM Marketplace; your on-premises license will work on this image as well. The Marketplace deployment uses a secondary disk that can be larger than 2 TiB, but it still cannot be resized after installation.

See my blog post that covers the Marketplace-based deployment approach in detail.